Accuracy and precision

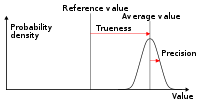

The International Organization for Standardization (ISO) defines a related measure, trueness: "the closeness of agreement between the arithmetic mean of a large number of test results and the true or accepted reference value."[1]

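While precision is a description of random errors (a measure of statistical variability), accuracy has two different definitions. More commonly, it is a description of systematic errors (a measure of statistical bias), independent of precision, so that a set of data can be accurate, precise, both, or neither. Alternatively, ISO defines accuracy as a combination of both types of observational error (random and systematic), so that high accuracy requires both high precision and high trueness. In simpler terms, given a statistical sample or set of data points from repeated measurements of the same quantity, the set can be said to be accurate if its average is close to the true value of the quantity being measured, and precise if its standard deviation is relatively small.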
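To make the distinction concrete, the sketch below compares two hypothetical samples (invented numbers, not real data) of a quantity whose true value is assumed to be 10.0. The offset of the sample mean from the true value stands in for accuracy, and the sample standard deviation stands in for precision.

```python
from statistics import mean, stdev

TRUE_VALUE = 10.0  # assumed true value of the measured quantity (hypothetical)

# Hypothetical repeated measurements of the same quantity.
samples = {
    "precise but inaccurate": [10.81, 10.79, 10.80, 10.82, 10.78],
    "accurate but imprecise": [9.2, 10.9, 9.6, 10.5, 9.8],
}

def describe(values, true_value):
    """Return (offset of the mean from the true value, sample standard deviation)."""
    return mean(values) - true_value, stdev(values)

for label, values in samples.items():
    offset, spread = describe(values, TRUE_VALUE)
    print(f"{label}: mean offset = {offset:+.3f}, std dev = {spread:.3f}")
```

The first sample clusters tightly (small standard deviation) around a mean that is 0.8 away from the true value; the second averages to the true value but scatters widely.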

Although the words precision and accuracy can be synonymous in colloquial use, they are deliberately contrasted in the context of the scientific method.[3][4]

The field of statistics, where the interpretation of measurements plays a central role, prefers to use the terms bias and variability instead of accuracy and precision: bias is the amount of inaccuracy and variability is the amount of imprecision.

The terminology is also applied to indirect measurements, that is, values obtained by a computational procedure from observed data.

In numerical analysis, accuracy is likewise the nearness of a calculation to the true value, while precision is the resolution of the representation, typically defined by the number of decimal or binary digits.
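A value can be stored with very fine resolution and still be far from the true value. As an illustrative sketch (the choice of 22/7 as an approximation of pi is ours, and `math.ulp` requires Python 3.9 or later), the spacing between adjacent 64-bit floats measures precision, while the distance from `math.pi` measures accuracy.

```python
import math

# A hypothetical approximation of pi, 22/7, stored as a 64-bit float.
approx = 22 / 7

# Precision: resolution of the representation. Neighbouring doubles near
# this value are math.ulp(approx) apart.
resolution = math.ulp(approx)

# Accuracy: nearness to the true value (here taken to be math.pi).
abs_error = abs(approx - math.pi)

print(f"22/7 = {approx!r}")
print(f"resolution (ulp): {resolution:.1e}")
print(f"absolute error vs pi: {abs_error:.1e}")
```

The representation resolves differences of about 4.4e-16, yet the value is off by about 1.3e-3: high precision, modest accuracy.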

A shift in the meaning of these terms appeared with the publication of the ISO 5725 series of standards in 1994, which is also reflected in the 2008 issue of the BIPM International Vocabulary of Metrology (VIM), items 2.13 and 2.14.[5]

According to ISO 5725-1,[1] the general term "accuracy" is used to describe the closeness of a measurement to the true value.[3]

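When the term is applied to sets of measurements of the same measurand, it involves a component of random error and a component of systematic error. In this case, trueness is the closeness of the mean of a set of measurement results to the actual (true) value, that is, the systematic error, and precision is the closeness of agreement among a set of results, that is, the random error.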
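One way to see how the systematic and random components combine (an illustration chosen here, not a formula taken from ISO 5725-1) is that the mean squared deviation of the results from the reference value splits exactly into the squared bias plus the population variance: MSE = bias² + σ². The sketch checks this on hypothetical results with an assumed reference value.

```python
from statistics import fmean, pvariance

REFERENCE = 50.0  # assumed accepted reference value (hypothetical)
results = [50.4, 50.1, 50.6, 50.3, 50.1]  # hypothetical repeated results

# Trueness: systematic error, the offset of the mean from the reference value.
bias = fmean(results) - REFERENCE

# Precision: random error, the scatter of the results about their own mean.
variance = pvariance(results)

# Combined closeness to the reference, expressed here as mean squared error.
mse = fmean((x - REFERENCE) ** 2 for x in results)

# Expect mse == bias**2 + variance, up to floating-point rounding.
print(f"bias = {bias:.3f}, variance = {variance:.4f}, mse = {mse:.4f}")
```

With these numbers the bias contributes 0.09 and the scatter 0.036 to a total of 0.126, so most of the error here is systematic.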

ISO 5725-1 and VIM also avoid the use of the term "bias", previously specified in BS 5497-1,[6] because it has different connotations outside the fields of science and engineering, such as in medicine and law.

Where not explicitly stated, the margin of error is understood to be one-half the value of the last significant place.
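The convention can be applied mechanically to a reading written as a decimal string. This sketch uses Python's standard `decimal` module and a hypothetical helper, `implied_margin`, that reads the place of the last written digit from the number's exponent and returns half a unit in that place. It assumes finite decimal strings and treats every written digit as significant.

```python
from decimal import Decimal

def implied_margin(reading: str) -> Decimal:
    """Half a unit in the last written place of a decimal reading (hypothetical helper).

    The exponent gives the place of the last digit, e.g. "843.6" -> -1,
    so the implied margin is 5 * 10**(-1 - 1) = 0.05.
    """
    exponent = Decimal(reading).as_tuple().exponent
    return Decimal(5) * Decimal(10) ** (exponent - 1)

for reading in ["843.6", "843.60", "8000", "8.0E3"]:
    print(reading, "+/-", implied_margin(reading))
```

Note that "8000" yields ±0.5 only because the sketch assumes its trailing zeros are significant; writing the same quantity as "8.0E3" states two significant figures and yields ±50.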

However, reliance on this convention can lead to false precision errors when accepting data from sources that do not obey it.

Accuracy is also used as a statistical measure of how well a binary classification test correctly identifies or excludes a condition.[9]

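That is, accuracy is the proportion of correct predictions (both true positives and true negatives) among the total number of cases examined:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

where TP = true positive; FP = false positive; TN = true negative; FN = false negative.

In this context, the concepts of trueness and precision as defined by ISO 5725-1 are not applicable.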

However, the term precision is used in this context to mean a different metric originating from the field of information retrieval (see below).
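As a sketch (the function name `accuracy` and the counts are hypothetical), accuracy in the binary-classification sense is the number of correct outcomes, TP + TN, divided by the total number of cases, TP + TN + FP + FN:

```python
def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Fraction of all cases that the test classified correctly."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("no cases to evaluate")
    return (tp + tn) / total

# Hypothetical counts from a screening test on 200 cases.
print(accuracy(tp=40, tn=130, fp=20, fn=10))  # → 0.85 (170 correct out of 200)
```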

Accuracy is sometimes also viewed as a micro metric, to underline that it tends to be greatly affected by the particular class prevalence in a dataset and the classifier's biases.
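The influence of class prevalence is easiest to see with a deliberately useless classifier. In this hypothetical sketch the dataset is 95% negative and the "classifier" always predicts negative, so it scores high accuracy while detecting no positives at all.

```python
# Hypothetical dataset: 95 negatives (0) and 5 positives (1).
y_true = [0] * 95 + [1] * 5

# A trivial "classifier" that ignores its input and always predicts negative.
y_pred = [0] * len(y_true)

# Accuracy: fraction of predictions that match the true label.
correct = sum(t == p for t, p in zip(y_true, y_pred))
acc = correct / len(y_true)

# True positives: positive cases the classifier actually caught.
tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))

print(f"accuracy = {acc:.2f}, true positives = {tp}")  # → accuracy = 0.95, true positives = 0
```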

The validity of a measurement instrument or psychological test is established through experiment or correlation with behavior.

More sophisticated metrics, such as discounted cumulative gain, take into account each individual ranking and are more commonly used where this is important.
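Discounted cumulative gain rewards relevant items more when they appear near the top of a ranking. The sketch below uses one common form of the formula, DCG_p = Σ_{i=1}^{p} rel_i / log₂(i + 1), on a hypothetical list of graded relevance scores; other variants (for example, with gains of 2^rel − 1) are also in use.

```python
import math

def dcg(relevances):
    """Discounted cumulative gain of relevance scores listed in ranked order."""
    # Position i (1-based) is divided by log2(i + 1), so rank 1 is undiscounted.
    return sum(rel / math.log2(i + 1) for i, rel in enumerate(relevances, start=1))

# Hypothetical graded relevance of six results, in the order they were ranked.
ranking = [3, 2, 3, 0, 1, 2]
print(round(dcg(ranking), 3))  # → 6.861
```

Sorting the same scores into descending order, `dcg(sorted(ranking, reverse=True))`, gives a larger value, which is the sense in which the metric accounts for where each item falls in the ranking rather than only whether it was retrieved.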