Large margin nearest neighbor

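Large margin nearest neighbor (LMNN) classification is a statistical machine learning algorithm for metric learning. It learns a pseudometric designed for k-nearest neighbor classification.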

The algorithm is based on semidefinite programming, a sub-class of convex optimization.

The goal of supervised learning (more specifically classification) is to learn a decision rule that can categorize data instances into pre-defined classes.

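The k-nearest neighbor rule assumes a training data set of labeled instances (i.e. the classes are known). It classifies a new data instance with the class obtained from a majority vote of the k closest (labeled) training instances, where closeness is measured with a pre-defined metric.

Large margin nearest neighbors is an algorithm that learns this global (pseudo-)metric in a supervised fashion to improve the classification accuracy of the k-nearest neighbor rule.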
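The k-nearest neighbor rule can be sketched in a few lines. The function name `knn_predict` and the `metric` callable are illustrative assumptions; any distance function, including a learned one, can be passed in.

```python
import numpy as np
from collections import Counter

def knn_predict(X_train, y_train, x_query, k, metric):
    """Classify x_query by majority vote among its k closest training points.

    `metric` is any callable returning a distance between two vectors
    (hypothetical interface, chosen for illustration only).
    """
    dists = np.array([metric(x, x_query) for x in X_train])
    nearest = np.argsort(dists, kind="stable")[:k]
    votes = Counter(y_train[i] for i in nearest)
    # On a tie, the label encountered first among the neighbors wins.
    return votes.most_common(1)[0][0]

X_train = np.array([[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]], dtype=float)
y_train = [0, 0, 0, 1, 1, 1]
euclidean = lambda a, b: float(np.linalg.norm(a - b))
print(knn_predict(X_train, y_train, np.array([0.5, 0.5]), k=3, metric=euclidean))  # 0
```

Because the vote depends only on which points are closest, changing the metric changes the prediction, which is what makes learning the metric worthwhile.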

The main intuition behind LMNN is to learn a pseudometric under which all data instances in the training set are surrounded by at least k instances that share the same class label.

If this is achieved, the leave-one-out error (a special case of cross validation) is minimized.

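The algorithm learns a pseudometric of the form $d(\vec{x}_i, \vec{x}_j) = (\vec{x}_i - \vec{x}_j)^\top \mathbf{M} (\vec{x}_i - \vec{x}_j)$. For $d$ to be well defined, the matrix $\mathbf{M}$ needs to be positive semidefinite. With $\mathbf{M}$ equal to the identity matrix, $d$ reduces to the (squared) Euclidean distance. This generalization is often (falsely[citation needed]) referred to as a Mahalanobis metric.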
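As a minimal sketch, assuming the usual quadratic form $(\vec{x}_i - \vec{x}_j)^\top \mathbf{M} (\vec{x}_i - \vec{x}_j)$ with a positive semidefinite matrix $\mathbf{M}$, the distance can be computed as below. The helper names `lmnn_distance` and `is_psd` are made up for this example.

```python
import numpy as np

def lmnn_distance(x_i, x_j, M):
    """Quadratic-form distance (x_i - x_j)^T M (x_i - x_j)."""
    diff = np.asarray(x_i, dtype=float) - np.asarray(x_j, dtype=float)
    return float(diff @ M @ diff)

def is_psd(M, tol=1e-10):
    """True if M is symmetric with no eigenvalue below -tol."""
    M = np.asarray(M, dtype=float)
    return bool(np.allclose(M, M.T) and np.linalg.eigvalsh(M).min() >= -tol)

x_i, x_j = np.array([0.0, 0.0]), np.array([3.0, 4.0])

# Identity matrix: the squared Euclidean distance.
print(lmnn_distance(x_i, x_j, np.eye(2)))           # 25.0

# A rank-deficient M = L^T L ignores the second coordinate entirely.
L = np.array([[1.0, 0.0]])
M = L.T @ L
print(is_psd(M))                                    # True
print(lmnn_distance(x_i, x_j, M))                   # 9.0
print(lmnn_distance([0.0, 0.0], [0.0, 7.0], M))     # 0.0
```

The last line shows why the term pseudometric is used: when $\mathbf{M}$ is singular, two distinct points can be at distance zero.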

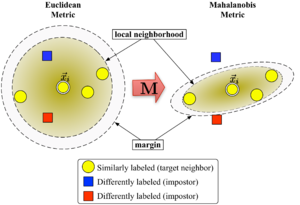

Figure 1 illustrates the effect of the metric under varying choices of the matrix $\mathbf{M}$ that defines it.

The two circles show the set of points with equal distance to the center point $\vec{x}_i$.

In the Euclidean case this set is a circle, whereas under the modified (Mahalanobis) metric it becomes an ellipsoid.

The algorithm distinguishes between two types of special data points: target neighbors and impostors.

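Target neighbors are selected before learning. Each instance $\vec{x}_i$ has exactly k target neighbors, all of which share its class label $y_i$; they are the data points that should become its nearest neighbors under the learned metric. Let us denote the set of target neighbors for a data point $\vec{x}_i$ as $N_i$.

An impostor of a data point $\vec{x}_i$ is another data point $\vec{x}_j$ with a different class label (i.e. $y_i \neq y_j$) that is among the nearest neighbors of $\vec{x}_i$. During learning the algorithm tries to minimize the number of impostors for all data instances in the training set.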
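The two kinds of special points can be made concrete with a short sketch. It assumes that target neighbors are picked as the k closest same-class points under the Euclidean distance (a common default, not the only option) and that an impostor is a differently labeled point lying within one unit of margin of a target neighbor's distance. The function names are hypothetical.

```python
import numpy as np

def pairwise_dists(X, M):
    """Matrix of (x_a - x_b)^T M (x_a - x_b) for all pairs of rows in X."""
    diffs = X[:, None, :] - X[None, :, :]
    return np.einsum("ijd,de,ije->ij", diffs, M, diffs)

def target_neighbors(X, y, k):
    """For each point, the k nearest same-class points (Euclidean), fixed up front."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    D = pairwise_dists(X, np.eye(X.shape[1]))
    idx = np.arange(len(X))
    targets = []
    for i in idx:
        same = np.flatnonzero((y == y[i]) & (idx != i))
        targets.append(same[np.argsort(D[i, same], kind="stable")][:k])
    return targets

def impostors(X, y, M, targets, margin=1.0):
    """Triplets (i, j, l): x_l has a different label than x_i and is not at
    least `margin` further from x_i than the target neighbor x_j."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    D = pairwise_dists(X, M)
    triplets = []
    for i, N_i in enumerate(targets):
        for j in N_i:
            for l in np.flatnonzero(y != y[i]):
                if D[i, l] < D[i, j] + margin:
                    triplets.append((i, int(j), int(l)))
    return triplets

X = np.array([[0, 0], [2, 0], [1, 0.1], [10, 0], [11, 0]], dtype=float)
y = np.array([0, 0, 1, 1, 1])
targets = target_neighbors(X, y, k=1)
print(impostors(X, y, np.eye(2), targets))
```

In this toy data set the point at (1, 0.1) sits between the two class-0 points, so it shows up as an impostor for both of them.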

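Large margin nearest neighbors optimizes the matrix $\mathbf{M}$ that defines the learned pseudometric $d$ with the help of semidefinite programming. The objective is twofold: for every data point $\vec{x}_i$, the target neighbors should be close and the impostors should be far away.

The first optimization goal is achieved by minimizing the average distance between instances and their target neighbors:

$$\sum_{i,\, j \in N_i} d(\vec{x}_i, \vec{x}_j),$$

where $N_i$ is the set of target neighbors of $\vec{x}_i$. The second goal is achieved by penalizing distances to impostors $\vec{x}_l$ that are less than one unit further away than target neighbors $\vec{x}_j$, which pushes them out of the local neighborhood of $\vec{x}_i$.

The resulting value to be minimized can be stated as:

$$\sum_{i,\, j \in N_i,\, l:\, y_l \neq y_i} \left[ d(\vec{x}_i, \vec{x}_j) + 1 - d(\vec{x}_i, \vec{x}_l) \right]_+$$

with the hinge loss function $[\cdot]_+ = \max(\cdot, 0)$, which ensures that impostors outside the margin are not penalized.

The margin of exactly one unit fixes the scale of the matrix $\mathbf{M}$; any other positive margin would only rescale $\mathbf{M}$.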
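The two goals can be combined into a single loss value, sketched below. The weight `lam` that balances the two terms is an assumption of this example, as are the function name `lmnn_loss` and the list-of-index-lists format for `targets`.

```python
import numpy as np

def lmnn_loss(X, y, M, targets, lam=1.0, margin=1.0):
    """Pull term (distances to target neighbors) plus lam times the
    push term (hinge loss over differently labeled points)."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    diffs = X[:, None, :] - X[None, :, :]
    D = np.einsum("ijd,de,ije->ij", diffs, M, diffs)
    pull, push = 0.0, 0.0
    for i, N_i in enumerate(targets):
        others = np.flatnonzero(y != y[i])
        for j in N_i:
            pull += D[i, j]
            # Hinge: only points closer than d(i, j) + margin contribute.
            push += np.maximum(D[i, j] + margin - D[i, others], 0.0).sum()
    return float(pull + lam * push)

# One-dimensional toy data: two class-0 points and one class-1 point.
X = np.array([[0.0], [1.0], [1.5]])
y = np.array([0, 0, 1])
targets = [[1], [0], []]          # the lone class-1 point has no target neighbor

print(lmnn_loss(X, y, np.array([[1.0]]), targets))  # 3.75
print(lmnn_loss(X, y, np.array([[4.0]]), targets))  # 12.0
```

With the identity matrix, the pull term is 2 and the only margin violation contributes 1.75: the class-1 point is 0.25 away from the second class-0 point, whose target neighbor is at distance 1.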

In the final optimization problem, the variables $\xi_{ijl}$ (together with two types of constraints) replace the hinge-loss term in the cost function.

They play a role similar to slack variables, absorbing the extent of violations of the impostor constraints.
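Written out, the problem takes roughly the following form. This is a reconstruction under the usual LMNN notation: $\lambda > 0$ is an assumed trade-off constant (typically chosen by cross-validation), $N_i$ is the set of target neighbors of $\vec{x}_i$, and $d$ is the quadratic-form distance defined by $\mathbf{M}$.

```latex
\begin{aligned}
\min_{\mathbf{M},\,\xi}\quad
  & \sum_{i,\, j \in N_i} d(\vec{x}_i, \vec{x}_j)
    \;+\; \lambda \sum_{i,\, j \in N_i,\, l:\, y_l \neq y_i} \xi_{ijl} \\
\text{subject to}\quad
  & d(\vec{x}_i, \vec{x}_j) + 1 - d(\vec{x}_i, \vec{x}_l) \;\le\; \xi_{ijl}
    \qquad \forall\, i,\; j \in N_i,\; l \text{ with } y_l \neq y_i, \\
  & \xi_{ijl} \;\ge\; 0, \\
  & \mathbf{M} \;\succeq\; 0 .
\end{aligned}
```

The first two constraint types force each $\xi_{ijl}$ to be at least as large as the corresponding hinge loss, and minimization makes it exactly that large. The last constraint keeps $\mathbf{M}$ positive semidefinite, which is what makes the problem a semidefinite program.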

The optimization problem is an instance of semidefinite programming (SDP).
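The positive semidefiniteness constraint on the matrix is what distinguishes an SDP from a linear program. A common way for first-order solvers to maintain it is to project onto the cone of positive semidefinite matrices by clipping negative eigenvalues, as in this sketch. The function name `project_psd` is hypothetical, and this is not claimed to be the exact routine of any particular LMNN implementation.

```python
import numpy as np

def project_psd(A):
    """Nearest (Frobenius norm) positive semidefinite matrix to the
    symmetric part of A, obtained by zeroing negative eigenvalues."""
    A = np.asarray(A, dtype=float)
    S = (A + A.T) / 2.0                  # symmetrize first
    w, V = np.linalg.eigh(S)             # eigenvalues w, eigenvectors in columns of V
    return (V * np.clip(w, 0.0, None)) @ V.T

A = np.array([[1.0, 2.0], [2.0, 1.0]])   # eigenvalues 3 and -1
P = project_psd(A)
print(np.round(P, 6))                    # all four entries equal 1.5
```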

Although SDPs tend to suffer from high computational complexity, this particular SDP instance can be solved very efficiently due to the underlying geometric properties of the problem.

In particular, most impostor constraints are naturally satisfied and do not need to be enforced during runtime (i.e. the set of variables $\xi_{ijl}$ is sparse).

A particularly well-suited solver technique is the working set method, which keeps a small set of constraints that are actively enforced and monitors the remaining (likely satisfied) constraints only occasionally to ensure correctness.
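The working set idea can be illustrated with a small projected subgradient loop. This is a simplified sketch, not the published solver: the step size `lr`, the iteration count, the rescan interval, the weight `lam`, and all function names are assumptions made for this example, and the working set here only ever grows.

```python
import numpy as np

def project_psd(A):
    """Clip negative eigenvalues so the matrix stays positive semidefinite."""
    w, V = np.linalg.eigh((A + A.T) / 2.0)
    return (V * np.clip(w, 0.0, None)) @ V.T

def violated_triplets(X, y, M, targets, margin=1.0):
    """Full scan over all triplets; returns those violating the margin."""
    diffs = X[:, None, :] - X[None, :, :]
    D = np.einsum("ijd,de,ije->ij", diffs, M, diffs)
    found = set()
    for i, N_i in enumerate(targets):
        others = np.flatnonzero(y != y[i])
        for j in N_i:
            for l in others[D[i, others] < D[i, j] + margin]:
                found.add((i, int(j), int(l)))
    return found

def fit_working_set(X, y, targets, lam=1.0, lr=1e-3, n_iter=200,
                    rescan_every=10, margin=1.0):
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    dim = X.shape[1]
    M = np.eye(dim)
    # The gradient of the pull term does not depend on M; compute it once.
    G_pull = np.zeros((dim, dim))
    for i, N_i in enumerate(targets):
        for j in N_i:
            u = X[i] - X[j]
            G_pull += np.outer(u, u)
    working = set()
    for t in range(n_iter):
        # Expensive full scan only occasionally; it can only add triplets.
        if t % rescan_every == 0:
            working |= violated_triplets(X, y, M, targets, margin)
        G = G_pull.copy()
        # Cheap check restricted to the working set.
        for (i, j, l) in working:
            u, v = X[i] - X[j], X[i] - X[l]
            if u @ M @ u + margin - v @ M @ v > 0.0:
                G += lam * (np.outer(u, u) - np.outer(v, v))
        M = project_psd(M - lr * G)
    return M

# Classes differ along the first axis; the second axis is mostly noise.
X = np.array([[0, 0], [0, 4], [1, 2], [1, 6]], dtype=float)
y = np.array([0, 0, 1, 1])
targets = [[1], [0], [3], [2]]
M = fit_working_set(X, y, targets)
print(np.round(M, 3))
```

On this toy data the learned matrix should put most of its weight on the first coordinate, since that is the direction separating the classes. The design point is that the inner loop touches only triplets in the working set, while the full scan over all triplets runs once every `rescan_every` iterations.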

LMNN was extended to multiple local metrics in a 2008 paper.[2] This extension significantly reduces the classification error, but involves a more expensive optimization problem.

In their 2009 publication in the Journal of Machine Learning Research,[3] Weinberger and Saul derive an efficient solver for the semidefinite program.

It can learn a metric for the MNIST handwritten digit data set in several hours, involving billions of pairwise constraints.

An open-source MATLAB implementation is freely available at the authors' web page.

Kumar et al.[4] extended the algorithm to incorporate local invariances to multivariate polynomial transformations and improved regularization.