Rectifier (neural networks)

In the context of artificial neural networks, the rectifier or ReLU (rectified linear unit) activation function[1][2] is an activation function defined as the non-negative part of its argument, i.e., the ramp function: where

This is analogous to half-wave rectification in electrical engineering.

ReLU is one of the most popular activation functions for artificial neural networks,[3] and finds application in computer vision[4] and speech recognition[5][6] using deep neural nets and computational neuroscience.

[7][8][9] It was first used by Alston Householder in 1941 as a mathematical abstraction of biological neural networks.

[10] It was introduced by Kunihiko Fukushima in 1969 in the context of visual feature extraction in hierarchical neural networks.

[11][12] It was later argued that it has strong biological motivations and mathematical justifications.

[13][14] In 2011,[4] ReLU activation enabled training deep supervised neural networks without unsupervised pre-training, compared to the widely used activation functions prior to 2011, e.g., the logistic sigmoid (which is inspired by probability theory; see logistic regression) and its more practical[15] counterpart, the hyperbolic tangent.

Advantages of ReLU include: Possible downsides can include: Leaky ReLU allows a small, positive gradient when the unit is inactive,[6] helping to mitigate the vanishing gradient problem.

[16][17] Parametric ReLU (PReLU) takes this idea further by making

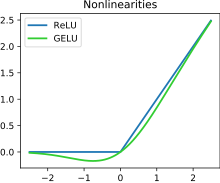

[18] Concatenated ReLU (CReLU) preserves positive and negative phase information:[19] GELU is a smooth approximation to the rectifier: where

This activation function is illustrated in the figure at the start of this article.

It has a "bump" to the left of x < 0 and serves as the default activation for models such as BERT.

This function can be approximated as: By making the change of variables

may be included: The derivative of softplus is the logistic function.

The multivariable generalization of single-variable softplus is the LogSumExp with the first argument set to zero: The LogSumExp function is and its gradient is the softmax; the softmax with the first argument set to zero is the multivariable generalization of the logistic function.

Exponential linear units try to make the mean activations closer to zero, which speeds up learning.

It has been shown that ELUs can obtain higher classification accuracy than ReLUs.

, ELU can be viewed as a smoothed version of a shifted ReLU (SReLU), which has the form

The mish function can also be used as a smooth approximation of the rectifier.

is a hyperparameter that determines the "size" of the curved region near

Squareplus shares many properties with softplus: It is monotonic, strictly positive, approaches 0 as

However, squareplus can be computed using only algebraic functions, making it well-suited for settings where computational resources or instruction sets are limited.

Additionally, squareplus requires no special consideration to ensure numerical stability when